Nvidia’s 27% surge in May brought its market capitalization to $2.7 trillion, behind only Microsoft and Apple among the world’s most valuable publicly traded companies. The chipmaker has reported a tripling of year-over-year sales for three consecutive quarters, driven by surging demand for its artificial intelligence processors.

Mizuho Securities estimates that Nvidia holds 70% to 95% of the market for AI chips used to train and deploy models like OpenAI’s GPT. Nvidia’s pricing power is evident in its gross margins of 78%, surprisingly high for a hardware company that has to manufacture and ship physical products.

Rival chipmakers Intel and Advanced Micro Devices reported gross margins of 41% and 47%, respectively, in their most recent quarters.

Nvidia’s position in the AI chip market has been described by some experts as a “moat”: the combination of its AI graphics processing units (GPUs) such as its flagship H100 and CUDA software gives it a huge advantage over competitors, making switching to an alternative seem almost unthinkable.

Still, Nvidia CEO Jensen Huang, whose net worth has ballooned from $3 billion to about $90 billion in the past five years, has said he is “worried and concerned” about his 31-year-old company losing its edge. At a conference late last year, Huang acknowledged that many powerful competitors are on the rise.

“I don’t think people are trying to put me out of business,” Huang said in November. “That’s different because we know that’s what they’re probably going to do.”

Nvidia has committed to releasing a new AI chip architecture every year, rather than every other year as in the past, and to releasing new software that could integrate its chips more deeply into AI software.

But Nvidia’s GPUs aren’t the only chips capable of carrying out the complex calculations that underpin generative AI. If less powerful chips can do the same work, Huang may have good reason to be worried.

The move from training AI models to what’s known as inference — deploying the models — could also give companies an opportunity to replace Nvidia’s GPUs, especially if they’re cheaper to buy and operate. With Nvidia’s flagship chips costing roughly $30,000 and up, there’s plenty of incentive for customers to look for alternatives.

“Nvidia wants 100% of the market share, but customers don’t want Nvidia to have 100% of the market share,” said Sid Sheth, co-founder of aspiring rival D-Matrix. “It’s just too big an opportunity. It’s just too unhealthy for one company to have everything.”

Founded in 2019, D-Matrix plans to release a server-oriented semiconductor card later this year that aims to reduce the cost and latency of running AI models. The company raised $110 million in September.

In addition to D-Matrix, a wide range of companies, from multinational corporations to startups, are vying for a share of the AI chip market, which could reach $400 billion in annual sales over the next five years, according to market analysts and AMD. Nvidia has generated about $80 billion in revenue over the past four quarters, and Bank of America estimates it sold $34.5 billion of AI chips last year.

Many of the companies taking on Nvidia’s GPUs are betting that a different architecture or specific trade-offs could produce a better chip for particular tasks. Device makers are also developing technology that could eventually do much of the AI computing that currently takes place on large GPU-based clusters in the cloud.

“Today, no one can deny that Nvidia is the hardware of choice for training and running AI models,” Fernando Vidal, co-founder of 3Fourteen Research, told CNBC. “But we’ve seen incremental progress in leveling the playing field, from hyperscalers developing their own chips to smaller startups designing their own silicon.”

AMD CEO Lisa Su wants investors to believe there’s plenty of room for many companies to succeed in this space.

“The key is to have a lot of options,” Su told reporters in December as the company unveiled its latest AI chip. “I think we’ll get to a point where there’s not just one solution, but multiple solutions.”

Other major semiconductor manufacturers

Lisa Su showed off the AMD Instinct MI300 chip during a keynote address at CES 2023 in Las Vegas, Nevada on January 4, 2023.

David Becker | Getty Images

AMD makes GPUs for gaming and, like Nvidia, is adapting them for AI in the data center. Its flagship chip is the Instinct MI300X. Microsoft has already bought AMD processors and offers access to them through its Azure cloud.

At the launch, Su emphasized that the chip excels at inference rather than competing with Nvidia on training. Last week, Microsoft said it was using AMD Instinct GPUs to serve its Copilot models. Morgan Stanley analysts took the news as a sign that AMD’s AI chip sales this year could exceed the company’s stated target of $4 billion.

Intel, which Nvidia overtook in revenue last year, is also trying to establish a presence in AI. The company recently announced the third version of its AI accelerator, Gaudi 3. This time, Intel compared it directly to the competition, describing it as a more cost-effective alternative that beats Nvidia’s H100 at running inference and trains models faster.

Bank of America analysts recently estimated that Intel will capture less than 1% of the AI chip market this year. Intel says it has a $2 billion order backlog for the chip.

The main obstacle to wider adoption may be software. AMD and Intel are both members of the UXL Foundation, an industry group that includes Google and is working to create a free alternative to Nvidia’s CUDA for controlling hardware for AI applications.

Nvidia’s top customers

One potential challenge for Nvidia is that it competes with some of its biggest customers. Cloud providers including Google, Microsoft and Amazon are all building processors for their own internal use. Together with Oracle, the big tech trio accounts for more than 40% of Nvidia’s revenue.

Amazon introduced its own AI-focused chips, branded Inferentia, in 2018; Inferentia is now in its second version. In 2021, Amazon Web Services introduced Trainium, targeted at training. Customers can’t buy the chips, but they can rent systems through AWS, which markets the chips as more cost-effective than Nvidia’s.

Google is perhaps the cloud provider most committed to its own silicon: The company has been using what it calls Tensor Processing Units (TPUs) to train and deploy AI models since 2015. In May, it unveiled Trillium, the sixth version of the chip, which it says was used to develop models such as Gemini and Imagen.

Google also uses Nvidia chips and provides them through its cloud.

Microsoft isn’t as far along. The company said last year that it is developing its own AI accelerator and processor, called Maia and Cobalt.

Meta isn’t a cloud provider, but the company needs vast amounts of computing power to run its software and websites and to serve ads. The Facebook parent company has bought billions of dollars’ worth of Nvidia processors, but it said in April that some of its homegrown chips are already in its data centers, offering “greater efficiency” compared with GPUs.

JPMorgan analysts estimated in May that the market for building custom chips for large cloud providers could be worth as much as $30 billion, with potential growth of 20% per year.

Startups

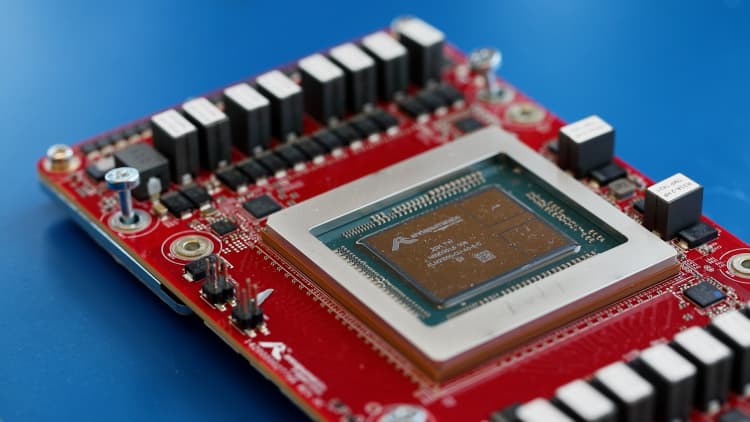

Cerebras’ WSE-3 chip is an example of new silicon from startups designed to run and train artificial intelligence.

Cerebras Systems

Venture capitalists see an opportunity for startups to get into the space: They invested $6 billion in AI semiconductor companies in 2023, up slightly from $5.7 billion the year before, according to PitchBook data.

Semiconductors are a tough space for startups because they are expensive to design, develop and manufacture, but they also offer an opportunity for differentiation.

Silicon Valley AI chipmaker Cerebras Systems, which focuses on the basic operations and bottlenecks of AI rather than the general-purpose nature of GPUs, was founded in 2015 and was valued at $4 billion in a recent funding round, according to Bloomberg.

Cerebras’ chip, the WSE-2, combines GPU capabilities with central processing and additional memory in a single device, which makes it better suited for training large models, CEO Andrew Feldman said.

“We have big chips and they have lots of little chips,” Feldman said. “They have a hard time moving data around, and we don’t.”

Feldman said the company, which counts the Mayo Clinic, GlaxoSmithKline and the U.S. military as customers, is competing with Nvidia to win business for its supercomputing systems.

“There’s enough competition, and I think that’s healthy for the ecosystem,” Feldman said.

D-Matrix’s Sheth said the company plans to release cards later this year with chiplets that enable more computation in memory rather than on a chip like a GPU. D-Matrix’s product can be inserted into AI servers alongside existing GPUs, reducing work for the Nvidia chips and helping to reduce the cost of generative AI.

Customers are “very open and eager to bring new solutions to the market,” Sheth said.

Apple and Qualcomm

Apple iPhone 15 series devices are displayed for sale at The Grove Apple retail store in Los Angeles, California on their launch date of September 22, 2023.

Patrick T. Fallon | AFP | Getty Images

The biggest threat to Nvidia’s data center business may be a shift in where processing happens.

Developers are becoming convinced that AI work will move from server farms to the laptops, PCs, and phones we own.

Large models like those developed by OpenAI require large clusters of powerful GPUs for inference, but companies like Apple and Microsoft are developing “small models” that require less power and data and can run on battery-powered devices. They may not be as capable as the latest version of ChatGPT, but they can handle other tasks, such as summarizing text or visual search.

Apple and Qualcomm have been updating their chips to run AI more efficiently, adding specialized sections for AI models, called neural processors, which can offer privacy and speed advantages.

Qualcomm recently announced PC chips that will enable laptops to run Microsoft AI services, and the company is also investing in several chipmakers that make low-power processors that can run AI algorithms outside of smartphones and laptops.

Apple is pitching its latest laptops and tablets as AI-optimized, thanks to the neural engines built into its chips. At its upcoming developers conference, Apple is expected to show off a suite of new AI features that will likely run on the silicon in its iPhones.