Dozens of technology leaders, including Elon Musk, have called on AI labs to pause development of systems that can compete with human-level intelligence.

An open letter from the Future of Life Institute, signed by Musk, Apple co-founder Steve Wozniak and 2020 presidential candidate Andrew Yang, called on AI labs to stop training models more powerful than GPT-4, the latest version of the large language model software developed by U.S. startup OpenAI.

“Contemporary AI systems are now becoming human-competitive at general tasks, and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth?” the letter read.

“Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?”

“Such decisions must not be delegated to unelected tech leaders,” the letter added.

The Future of Life Institute is a non-profit organization based in Cambridge, Massachusetts that promotes the responsible and ethical development of artificial intelligence. Its founders include MIT cosmologist Max Tegmark and Skype co-founder Jaan Tallinn.

The institute has previously gotten Musk, Google-owned AI lab DeepMind and others to pledge never to develop lethal autonomous weapons systems.

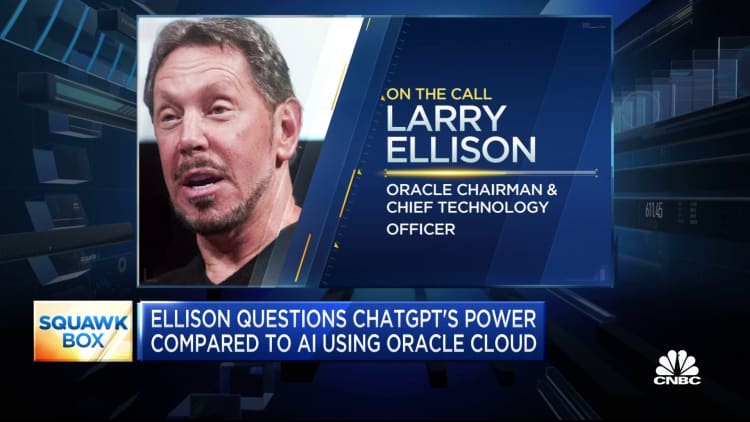

The institute said it is calling on all AI labs to “immediately pause for at least 6 months the training of AI systems more powerful than GPT-4.”

Released earlier this month, GPT-4 is believed to be much more advanced than its predecessor, GPT-3.

“If such a pause cannot be enacted quickly, governments should step in and institute a moratorium,” it added.

ChatGPT, a viral AI chatbot, wowed researchers with its ability to generate human-like responses to user prompts. By January, ChatGPT had become the fastest-growing consumer application of all time, reaching 100 million monthly active users just two months after launch.

The technology has been trained on vast amounts of data from the internet and has been used to produce everything from poetry in the style of William Shakespeare to draft legal opinions on court cases.

But AI ethicists have also raised concerns about the technology’s potential for abuse, including plagiarism and misinformation.

Musk has previously said he believes AI is one of the “biggest risks” to civilization.

The CEO of Tesla and SpaceX, who is also one of the co-founders of OpenAI, left OpenAI’s board of directors in 2018 and holds no stake in the company.

He has criticized the organization several times recently, saying he believes it has strayed from its original purpose.

As the technology advances at a rapid pace, regulators are also racing to get a handle on AI tools. On Wednesday, the UK government issued a white paper on AI, tasking various regulators with applying existing legislation to oversee the use of AI tools in their respective sectors.